Research Article | Open Access | Published online: 11 March 2026

VISTA.AI: Voice-Based Interactive System for Transformative Assistance via Holographic Display

Gaurang Jagtap, Siddhant Jawalekar, Manasvi Khandwe,* Yukta Khushalani, Aditi Kolhapure and Vaishali Rajput

Department of Artificial Intelligence and Data Science, Vishwakarma Institute of Technology, Pune, Maharashtra, 411037, India

*Email: manasvi.khandwe241@vit.edu (M. Khandwe)

J. Inf. Commun. Technol. Algorithms Syst. Appl., 2026, 2(1), 26302 https://doi.org/10.64189/ict.26302

Received: 19 January 2026; Revised: 27 February 2026; Accepted: 09 March 2026

Abstract

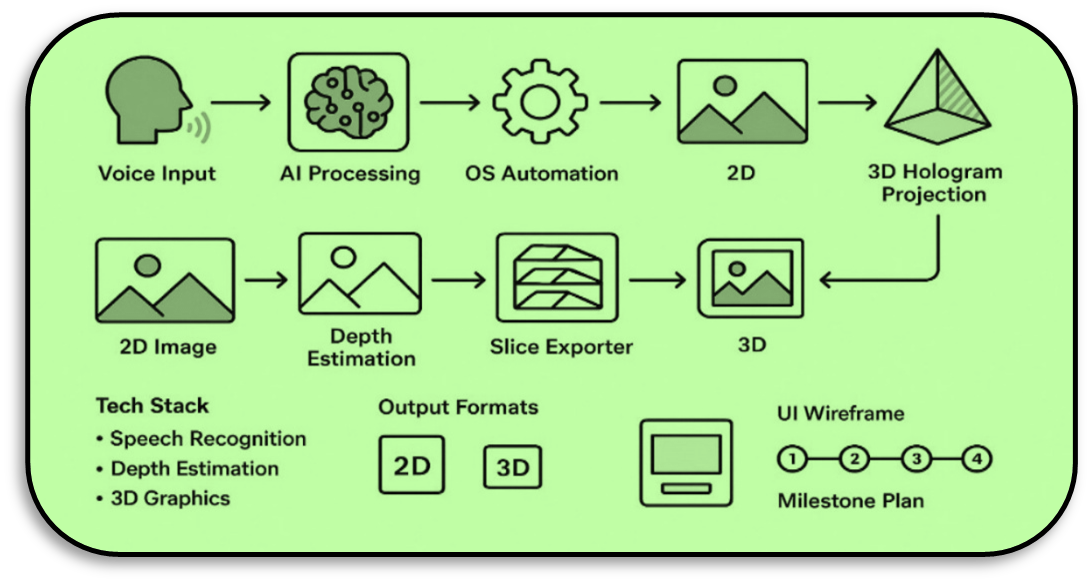

The growing demand for intelligent automation has driven the rapid development of AI-powered assistants. However, most of these assistants remain limited to voice communication and a 2D screen, which constrains user engagement. This paper introduces VISTA.AI, an AI-powered assistive system that addresses these limitations by combining voice-driven desktop operation with hologram-ready visuals. The proposed system records voice commands through a microphone and processes them using natural language processing (NLP). The interpreted commands are then used to perform operating-system-level tasks. Blender-based modelling, using extrusion and depth mapping, converts 2D visuals such as text, graphs, and icons into 3D hologram-ready frames. These frames are projected using a Pepper's Ghost setup, creating the appearance of floating holographic images. Experimental evaluation under controlled lighting conditions shows high recognition accuracy, efficient OS automation, and good-quality holographic output. By integrating multimodal interaction with OS-level control and low-cost holographic projection, VISTA.AI extends the capabilities of traditional AI assistants. Its modular architecture allows gesture-based control, volumetric holography, smart-environment integration, and AR/VR compatibility to be added in the future. This work presents a practical pathway toward Jarvis-like interfaces through a scalable and reasonably priced framework for immersive, AI-driven human–computer interaction. Our results show that it is feasible to combine speech commands, automated system tasks, and 3D holographic visualization in real time using accessible technologies, opening routes toward more immersive, interactive, and engaging computing experiences.

Keywords:

Graphical Abstract

Novelty statement

A modular AI system integrating desktop control and real-time holographic projections for immersive, low-cost human–computer interaction.